Research

Research Interests

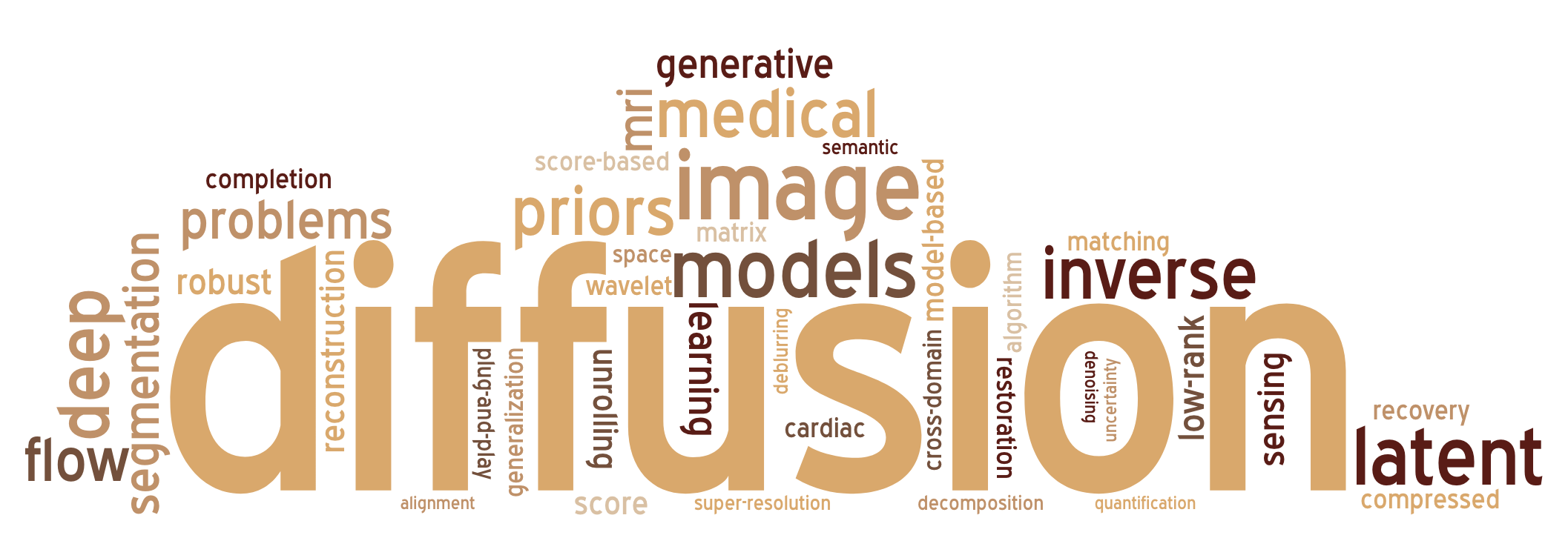

My work sits at the intersection of generative modelling, inverse problems, and computational imaging, with a particular focus on making powerful models run efficiently in resource-constrained, clinically relevant settings. I am interested in both the theoretical foundations and the practical deployment of these methods.

Problems I Think About

- Designing efficient generative models that avoid reliance on large pretrained backbones for downstream tasks

- Inverse problems: image reconstruction, super-resolution, deblurring, compressed sensing

- Model-Based Deep Learning: algorithm unrolling, unfolded optimization networks

- Robust estimation under heavy-tailed or impulsive noise, and in ill-posed inverse problems

- Structural priors, such as low rank or sparsity, in matrix/tensor completion and generative modelling

Applications I Care About

- Medical Imaging: segmentation, classification, uncertainty quantification

- Image restoration: artifact removal, denoising, inpainting

- Generative modelling in data-scarce, unsupervised/semi-supervised settings

- Signal processing for Principal Component Pursuit (PCP)-style problems

Research Experience

Summer 2024 – Present · ATP Lab, Electrical Engineering Department, LUMS

An ongoing research assistantship in which we systematically cover diffusion-based methods for inverse problems (BIRD, DPS, Blind-DPS), medical imaging (MedSyn, SDSeg, SSDiffRecon), and computational imaging (ControlNet, StableSR, DiffBIR), among others. On the practical side, this culminated in Wave-GMS (ICASSP 2026): a ~2.6M-parameter generative segmentation model trained entirely on a consumer GPU (RTX 2080 Ti, 12GB VRAM) that achieves state-of-the-art cross-domain performance.

Spring 2023 – Spring 2024 · Senior Capstone Project, Electrical Engineering Department, LUMS

Explored Model-Based Deep Learning through the lens of algorithm unrolling, which bridges classical iterative solvers with trainable deep networks. Began with a reading course in High-Dimensional Data Analysis and Compressed Sensing (Wright & Ma), then self-studied duality theory, the Augmented Lagrangian Method (ALM), proximal gradient methods, and convex relaxation techniques, and applied these to the Matrix Completion (MC) problem.

- Implemented popular deep learning algorithms from scratch (GitHub) and replicated key MC baseline results (GitHub)

- Built ConvMC-Net: an unfolded ALM network for standard matrix completion (GitHub)

- Proposed ConvHuberMC-Net: an unfolded M-estimation-based network for robust MC under impulsive / heavy-tailed noise (GitHub)
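
For context, the kind of classical iteration that algorithm unrolling starts from can be sketched as below. This is a generic proximal gradient solver for nuclear-norm-regularised matrix completion, not ConvMC-Net or ConvHuberMC-Net (which unfold ALM and M-estimation-based updates respectively). The names `svt`, `complete_matrix`, `tau`, `step`, and the synthetic rank-2 test matrix are all illustrative assumptions.

```python
import numpy as np

def svt(X, tau):
    """Singular value thresholding: the proximal operator of tau * nuclear norm."""
    U, s, Vt = np.linalg.svd(X, full_matrices=False)
    return (U * np.maximum(s - tau, 0.0)) @ Vt

def complete_matrix(M_obs, mask, tau=0.1, step=1.0, n_iters=200):
    """Proximal gradient on 0.5 * ||mask * (X - M_obs)||_F^2 + tau * ||X||_*.

    mask is a 0/1 array marking observed entries; step <= 1 keeps the
    iteration a descent method because the data term has Lipschitz constant 1.
    """
    X = np.zeros_like(M_obs, dtype=float)
    for _ in range(n_iters):
        grad = mask * (X - M_obs)              # gradient of the data-fit term
        X = svt(X - step * grad, step * tau)   # shrink singular values
    return X

# Synthetic example (assumed setup): a rank-2 matrix with ~60% of entries observed.
rng = np.random.default_rng(0)
M = rng.standard_normal((30, 2)) @ rng.standard_normal((2, 30))
mask = (rng.random(M.shape) < 0.6).astype(float)
tau = 0.1
X_hat = complete_matrix(mask * M, mask, tau=tau)

rel_err = np.linalg.norm(X_hat - M) / np.linalg.norm(M)
print(f"relative recovery error: {rel_err:.3f}")
```

In an unrolled network, a fixed number of such iterations become layers, and quantities like `tau` and `step` (or the linear operators themselves) are learned from data rather than hand-tuned.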

Sept – Dec 2023 · Research Assistant, Electrical Engineering Department, LUMS

A semester-long independent project compiling rigorous yet accessible notes on fundamental mathematical inequalities and their applications in data science and information theory. Materials were drawn from journals, conference papers, and lecture resources; each inequality is accompanied by background, a proof, key considerations, practical applications, and a Python/MATLAB demonstration.

- Covered inequalities ranging from concentration inequalities and information-theoretic bounds to optimisation tools central to ML

- Example: Jensen's Inequality — proof, applications to KL divergence, convex optimisation, and Generative Adversarial Networks (GANs)

- Full collection available on request via Dr. Hassan
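
As an illustration of the kind of Python demonstration described above, here is a minimal sketch for Jensen's Inequality. It is not taken from the notes themselves; the function names `jensen_gap` and `kl_divergence`, the sample distribution, and the probability vectors are assumptions chosen for the example. It checks numerically that E[φ(X)] ≥ φ(E[X]) for convex φ, and that the KL divergence, whose non-negativity follows from Jensen applied to −log, is non-negative.

```python
import numpy as np

def jensen_gap(samples, phi):
    """Estimate E[phi(X)] - phi(E[X]); non-negative whenever phi is convex."""
    samples = np.asarray(samples, dtype=float)
    return float(np.mean(phi(samples)) - phi(np.mean(samples)))

def kl_divergence(p, q):
    """KL(p || q) for discrete distributions, assuming q > 0 wherever p > 0."""
    p = np.asarray(p, dtype=float)
    q = np.asarray(q, dtype=float)
    support = p > 0                      # terms with p = 0 contribute nothing
    return float(np.sum(p[support] * np.log(p[support] / q[support])))

rng = np.random.default_rng(0)
x = 0.1 + rng.exponential(scale=1.0, size=10_000)   # strictly positive samples

gap_square = jensen_gap(x, np.square)                # equals the sample variance
gap_neglog = jensen_gap(x, lambda t: -np.log(t))     # -log is convex on (0, inf)

p = np.array([0.5, 0.3, 0.2])
q = np.array([0.2, 0.5, 0.3])
kl_pq = kl_divergence(p, q)

print(f"gap for x^2:   {gap_square:.4f}")
print(f"gap for -log:  {gap_neglog:.4f}")
print(f"KL(p || q):    {kl_pq:.4f}")
```

For φ(x) = x², the gap is exactly the variance of the samples, which gives a quick sanity check on the estimate.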

2022 · Research Assistant, Networks Systems Group, Computer Science Department, LUMS

Built a digital literacy assessment app as a sequel to a published study on validated digital literacy measures for low-internet-experience populations (Ali, Raza, Qazi — Development Engineering, 2023). The app evaluates a user's digital literacy score (0–1) from a structured questionnaire.

- Self-taught the Shiny framework in R and explored model deployment techniques within it

- Deployed a Random Forest classifier as the scoring backend, tuned on validated survey responses

- Designed for communities with limited prior technology exposure — simple onboarding, low-bandwidth usage